My essay yesterday was about the mechanics of how product design is changing—designing in code, orchestrating AI agents, collapsing the Figma-to-production handoff. That piece got into specifics. This piece by Pavel Bukengolts, writing for UX Magazine, is about the mindset:

AI is changing the how — the tools, the workflows, the speed. But the why of UX? That’s timeless.

Bukengolts is right. UX as a discipline isn’t going anywhere. But I worry that articles like this, well-intentioned and directionally correct, give designers permission to keep doing exactly what they’re doing now. “Sharpen your critical thinking” and “be the conscience in the room” are good advice. They’re also the kind of advice that lets you nod along without changing anything about your Tuesday.

The article lists the skills designers need: critical thinking, systems thinking, AI literacy, ethical awareness, strategic communication. All valid. But none of that addresses what the actual production work looks like six months from now. Bukengolts again:

In a world where AI does the work, your value is knowing why it matters and who it affects.

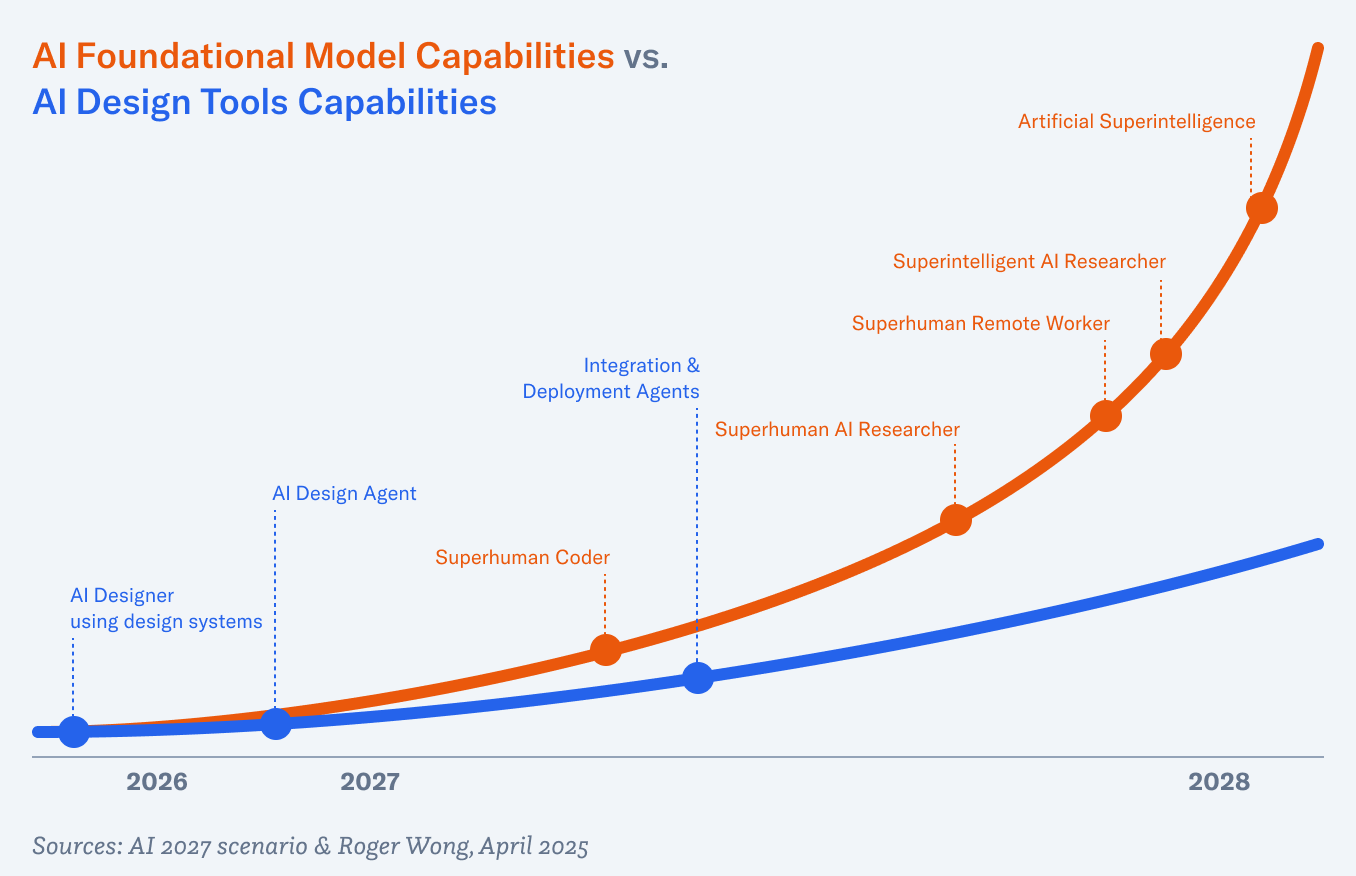

I agree with this in principle. The problem is the gap between “UX matters” and “your current UX role is secure.” Those are very different statements. UX will absolutely matter in an AI-powered world: someone has to shape the experience, evaluate whether it actually works for people, and catch the things the model gets wrong. But the number of people doing that work, and what the job requires of them, is changing fast. I wrote in my essay that junior designers who can’t critically assess AI-generated work will find their roles shrinking. The skill floor is rising. Saying “stay curious and principled” isn’t wrong, but it’s not enough.

The piece closes with reassurance:

Yes, this moment is big. Yes, you’ll need to adapt. But no, you are not obsolete.

I’d feel better about that line if the article spent more time on how to adapt, not in terms of thinking skills but in terms of the actual work. Learn to design in code. Get comfortable directing AI agents. Understand your design system well enough to make it machine-readable. Those are the specific steps that will separate designers who thrive from designers who get the mindset right but miss the shift happening underneath them.

Design Smarter: Future-Proof Your UX Career in the Age of AI

Is UX still a thing? AI is rising fast, but UX isn’t disappearing. It’s evolving. The big shift isn’t just tools, it’s how we think: critical thinking to spot gaps, systems thinking to map complexity, and AI literacy to understand capabilities without pretending we build it all. Empathy and ethics become the edge: designers must ask who’s affected, what’s left out, and what unintended consequences might arise. In practice, we translate data and research into a story that matters, bridging users, business, and tech, with strategic communication that keeps everyone aligned. In an AI-powered world, human judgment, why it matters, and to whom, stays central. Stay curious, sharp, and principled.